Rohit Philip, Ph.D. COMPUTER VISION ENGINEER Postdoctoral Research Associate - I |


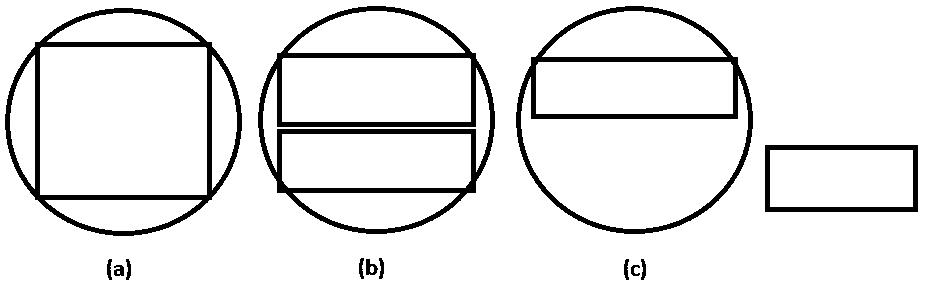

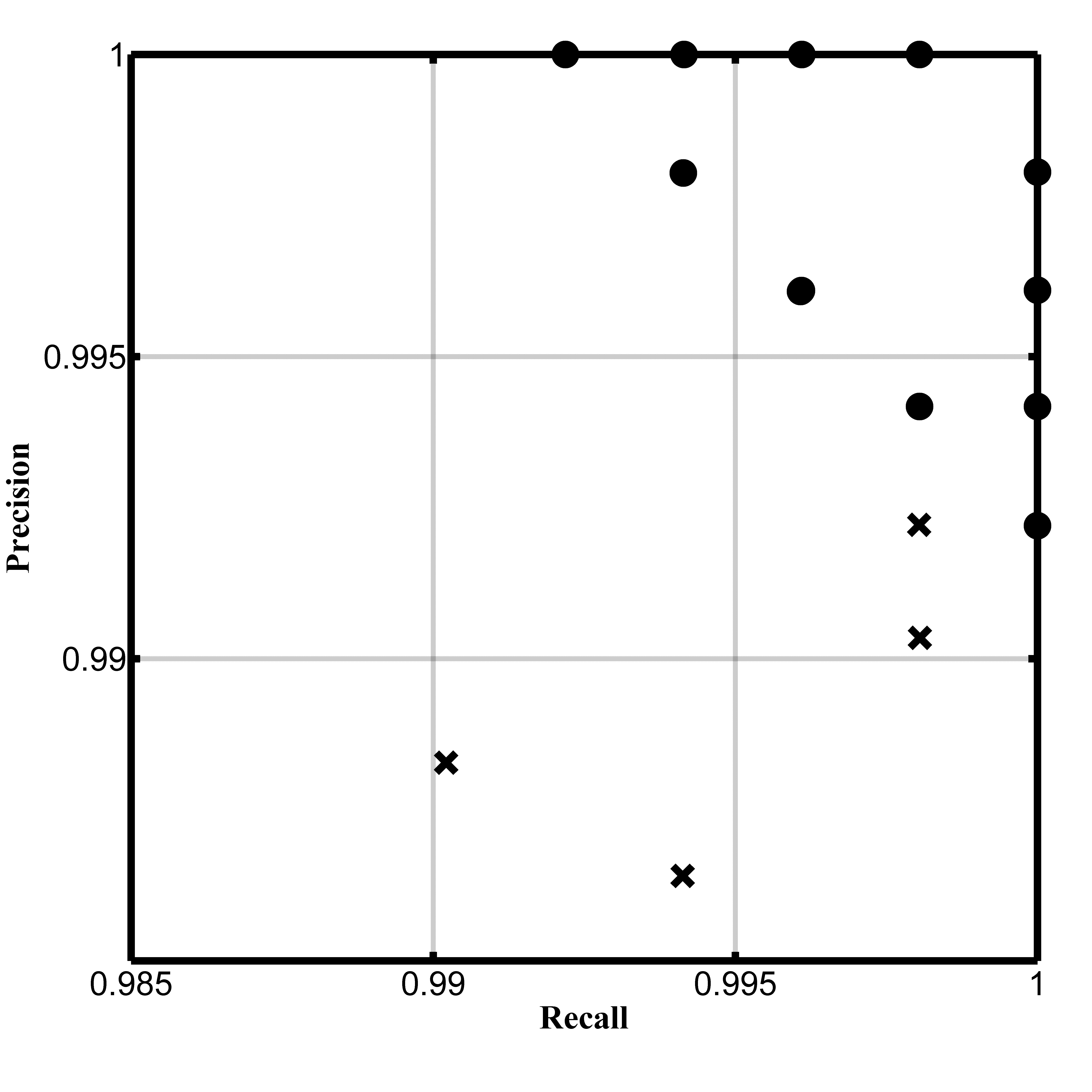

Classical detection theory has long used traditional measures such as precision, recall, and F-measures to evaluate the quality of detection results,

either during performance analysis of competing detection algorithms, or during parameter tuning to find the set of parameters that produces optimal detection results during development.

These measures work well at the pixel level, and at the object level when detection is perfect, i.e., when there is a one-to-one correspondence between objects produced by the detection algorithm

and objects present in the ground truth (true positives), or no correspondence at all between objects (false positives and false negatives).

Cases of many-to-one correspondence, however, are completely ignored: situations where a ground truth object is split into multiple detected objects (splits), or

multiple ground truth objects are represented by a single detection (merges), are not included in the quantification, resulting in incorrect evaluation.

These situations arise commonly in practice, either as an end result of an imperfect detection algorithm, or as intermediate results of parameter tuning,

rendering it impossible to objectively evaluate performance. At its core, the problem consists of precisely determining how all the detected objects (and misses)

are classified based on the underlying ground truth. This paper precisely defines the relationship between the complete set of detected objects, and the underlying ground truth objects.

Once a precise definition is established, we develop two new precision measures, and two new recall measures, resulting in four new F and G measures,

all of which reduce to their classical detection theory counterparts in the case of perfect detection (no splits or merges).

We introduce the shared positive detection - shared positive truth (SPD-SPT) curve, and evaluate the detection performance of eight object detection algorithms using our novel kallynodetection framework.

Classical detection theory has long used traditional measures such as precision, recall, F measure, and G measure to

evaluate the quality of detection results. Such evaluation can be done for performance analysis of competing detection algorithms, or

for parameter tuning to optimize parameters based on training data. The performance analysis can be done at the sample level (e.g.,

pixel-by-pixel) or at the object level (e.g., a set of connected pixels are grouped together into one object). Conventional performance

measures are effective when applied at the sample level or when applied at the object level with simple detection outcomes. In many

cases, however, object-level detection often results in hybrid detections such as a single ground-truth object split into multiple detected

objects or multiple ground-truth objects merged into a single detected object and combinations thereof. In such cases, the conventional

performance measures are ineffective. We propose a generalized framework for evaluating object detection algorithms, which involves

two new precision measures and two new recall measures, resulting in four new F and G measures, all of which reduce to their

classical detection theory counterparts in cases with simple detection outcomes (no split detections or merged truths). We introduce

the shared positive detection vs. shared positive truth (SPD-SPT) curve, and evaluate the detection performance of eight object

detection algorithms using our generalized framework.
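As a point of reference for the abstract above, the sketch below computes the classical precision, recall, F measure, and G measure from object counts, and then sorts object-level correspondences into one-to-one matches, splits, merges, false negatives, and false positives. It does not implement the new measures proposed in the paper, which are not defined on this page. The function names and the representation of overlaps as `(truth_index, detection_index)` pairs are assumptions made for illustration.

```python
from math import sqrt


def classical_measures(tp, fp, fn):
    """Classical precision, recall, F measure (harmonic mean of precision
    and recall) and G measure (geometric mean of precision and recall)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f_measure = 2 * precision * recall / total if total else 0.0
    g_measure = sqrt(precision * recall)
    return precision, recall, f_measure, g_measure


def classify_correspondences(overlaps, n_truth, n_detected):
    """Group objects by the kind of correspondence they take part in.

    overlaps is a set of (truth_index, detection_index) pairs, one pair per
    overlapping ground-truth/detected object (hypothetical input format).
    """
    truth_links = {t: set() for t in range(n_truth)}
    det_links = {d: set() for d in range(n_detected)}
    for t, d in overlaps:
        truth_links[t].add(d)
        det_links[d].add(t)

    outcome = {"one_to_one": [], "splits": [], "merges": [],
               "false_negatives": [], "false_positives": []}
    for t, dets in truth_links.items():
        if not dets:
            outcome["false_negatives"].append(t)   # truth object missed
        elif len(dets) > 1:
            outcome["splits"].append(t)            # truth split into several detections
        elif len(det_links[next(iter(dets))]) == 1:
            outcome["one_to_one"].append(t)        # the simple true-positive case
    for d, truths in det_links.items():
        if not truths:
            outcome["false_positives"].append(d)   # detection with no truth object
        elif len(truths) > 1:
            outcome["merges"].append(d)            # detection covering several truths
    return outcome


if __name__ == "__main__":
    pairs = {(0, 0), (1, 1), (1, 2), (2, 3), (3, 3)}
    print(classify_correspondences(pairs, n_truth=4, n_detected=5))
    print(classical_measures(tp=8, fp=2, fn=2))
```

When the `splits` and `merges` lists are empty, the classical counts describe the detection result completely, which is the case in which the abstract says the new measures reduce to their classical counterparts.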

In preparation. To be updated soon.

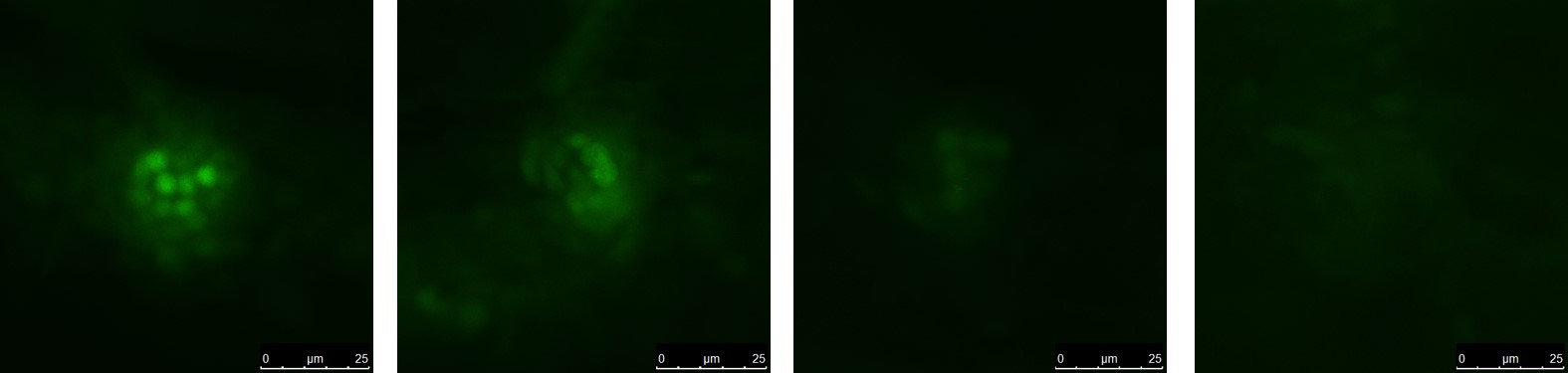

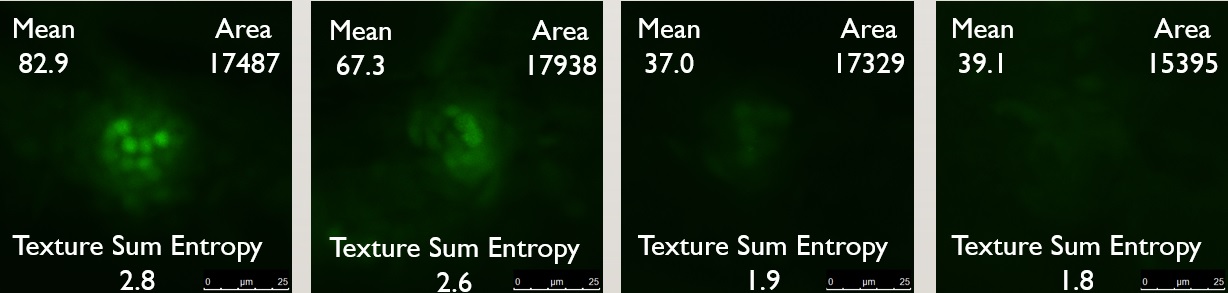

We developed a machine learning solution to automatically determine the damage score of confocal microscopy images of zebrafish neuromast after exposure to ototoxins. Raw images showing damage

scores from 0 (least damage) to 3 (most damage) are shown below.

The first step is segmenting the zebrafish neuromast from the surrounding cell structure. Since all the information was present in the green channel of the image, segmentation was accomplished by

simple thresholding (all pixels with intensity less than the 93rd percentile were attenuated; this corresponded to the best Dice score with respect to manually obtained ground truth segmentations).
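The thresholding step can be sketched in a few lines of plain Python. This is a minimal illustration, not the project's code: it assumes the green channel is given as a list of rows of intensities, treats "attenuated" as "set to background", and uses linear interpolation for the percentile. The function names are hypothetical.

```python
def percentile(values, q):
    """q-th percentile (0-100) with linear interpolation between sorted samples."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("percentile of an empty sequence")
    rank = (len(ordered) - 1) * q / 100.0
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)


def threshold_green(green, q=93.0):
    """Binary mask that keeps pixels at or above the q-th percentile of the
    green channel; everything below the cutoff is treated as background."""
    cutoff = percentile([v for row in green for v in row], q)
    return [[v >= cutoff for v in row] for row in green]


def dice(mask_a, mask_b):
    """Dice score 2|A and B| / (|A| + |B|) between two binary masks."""
    a = [bool(v) for row in mask_a for v in row]
    b = [bool(v) for row in mask_b for v in row]
    intersection = sum(1 for x, y in zip(a, b) if x and y)
    total = sum(a) + sum(b)
    return 2.0 * intersection / total if total else 1.0


if __name__ == "__main__":
    green = [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11], [12, 13, 14, 15]]
    mask = threshold_green(green, q=75.0)
    truth = [[False] * 4, [False] * 4, [False] * 4, [False, True, True, True]]
    print(dice(mask, truth))
```

Sweeping `q` over a range of percentiles and keeping the value with the highest Dice score against manual segmentations is one way a cutoff such as the 93rd percentile could be selected.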

We then extracted 99 features, including size, shape, intensity, and texture, three of which are shown below.

Cross validation was performed to reduce the feature set, based on a training data set of manually annotated damage scores.

We then cascaded four individual support vector machine (SVM) classifiers to determine hyperplanes in the reduced feature space that best separate the binary classes corresponding to each damage score.

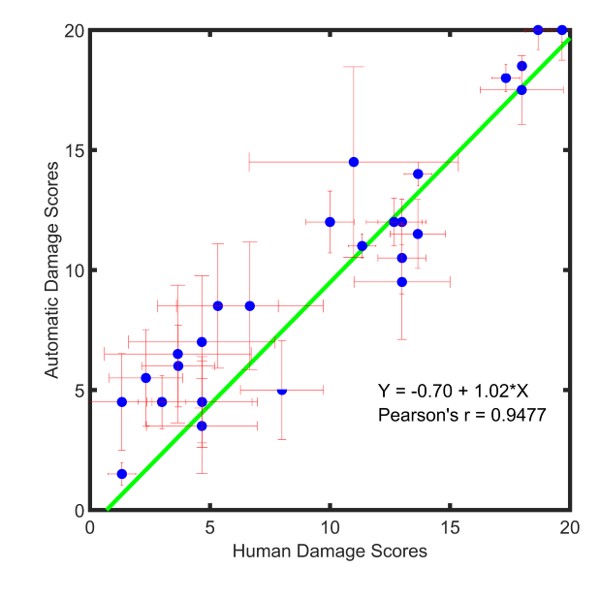

A Bland-Altman analysis was performed to compare the two damage scoring methods (manual versus automatic); the results are presented below.
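For readers unfamiliar with the method, a Bland-Altman comparison reduces to the mean difference between paired scores (the bias) and the 95% limits of agreement around it. The sketch below shows that arithmetic under the assumption that both methods score the same images in the same order; the function name and the sample scores are illustrative and are not data from the study.

```python
from statistics import mean, stdev


def bland_altman(manual, automatic):
    """Return (bias, lower_limit, upper_limit) for paired scores.

    bias is the mean of (automatic - manual); the limits of agreement are
    bias -/+ 1.96 times the sample standard deviation of the differences.
    """
    if len(manual) != len(automatic) or len(manual) < 2:
        raise ValueError("need two equally long sequences with at least 2 pairs")
    diffs = [a - m for m, a in zip(manual, automatic)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


if __name__ == "__main__":
    manual_scores = [0, 1, 2, 3, 1, 2]      # illustrative values only
    automatic_scores = [0, 1, 3, 3, 0, 2]   # illustrative values only
    print(bland_altman(manual_scores, automatic_scores))
```

A bias near zero with narrow limits of agreement would indicate that the automatic scores can stand in for the manual ones.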

R.C. Philip, J.J. Rodríguez, M. Niihori, R.H. Francis, J.A. Mudery, J.S. Caskey, E. Krupinski, and A. Jacob,

"Automated High-Throughput Damage Scoring of Zebrafish Lateral Line Hair Cells After Ototoxin Exposure"

Zebrafish 2018;15(2):145-155.

R.C. Philip, S.R.S.P. Malladi, M. Niihori, A. Jacob and J.J. Rodríguez,

"Performance of Supervised Classifiers for Damage Scoring of Zebrafish Neuromasts,"

2018 IEEE Southwest Symp. on Image Analysis and Interpretation,

Apr. 2018, Las Vegas, NV, pp. 113-116.

Automating the behavioral assay was successful, and we achieved high throughput with minimal error. However, we noticed that damaged fish were sometimes stuck to the bottom of the tank,

oriented against the flow, contributing to a higher rheotaxis index than expected. We improve the behavioral assay by tracking individual zebrafish from frame to frame and using this motion information

to obtain a complete picture of zebrafish behavior. We associate zebrafish from frame to frame by using optical flow to determine where each detected zebrafish is headed. This frame-to-frame

association sometimes results in broken track fragments, which are then connected at a later point to produce complete tracking information.
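The association step described above can be illustrated with a simple greedy matcher: each detection in the previous frame is moved by its estimated flow vector, and the predicted position is paired with the nearest unclaimed detection in the current frame. This is a sketch under stated assumptions, not the project's algorithm; the flow vectors are taken as given, and the function name and the `max_dist` gate are hypothetical.

```python
from math import hypot


def associate(prev_positions, flows, curr_positions, max_dist=10.0):
    """Greedy nearest-neighbour association between two frames.

    prev_positions: (x, y) centroids detected in frame t
    flows:          (dx, dy) estimated displacement of each previous detection
    curr_positions: (x, y) centroids detected in frame t + 1
    Returns {prev_index: curr_index}. Previous detections with no match
    within max_dist are left out, which ends that track fragment.
    """
    candidates = []
    for i, ((x, y), (dx, dy)) in enumerate(zip(prev_positions, flows)):
        px, py = x + dx, y + dy                    # flow-predicted position
        for j, (cx, cy) in enumerate(curr_positions):
            dist = hypot(cx - px, cy - py)
            if dist <= max_dist:
                candidates.append((dist, i, j))

    matches, used_prev, used_curr = {}, set(), set()
    for dist, i, j in sorted(candidates):          # closest pairs are matched first
        if i not in used_prev and j not in used_curr:
            matches[i] = j
            used_prev.add(i)
            used_curr.add(j)
    return matches


if __name__ == "__main__":
    prev = [(0.0, 0.0), (10.0, 10.0)]
    flow = [(5.0, 0.0), (0.0, -5.0)]
    curr = [(10.0, 5.0), (5.0, 1.0), (50.0, 50.0)]
    print(associate(prev, flow, curr))
```

Detections in the current frame that remain unmatched would start new track fragments, which is where the later fragment-linking stage comes in.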

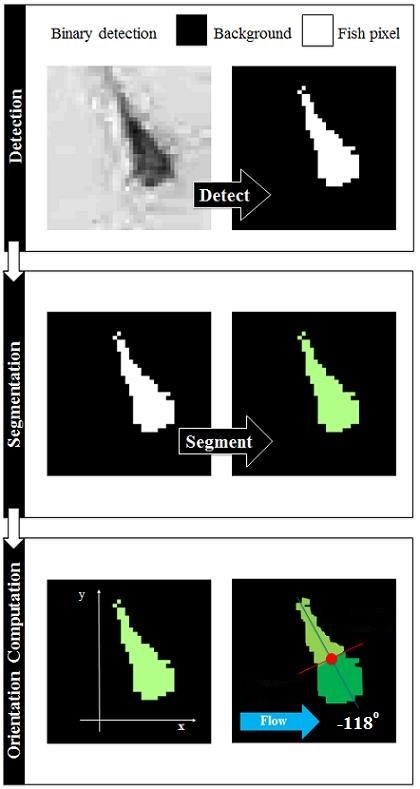

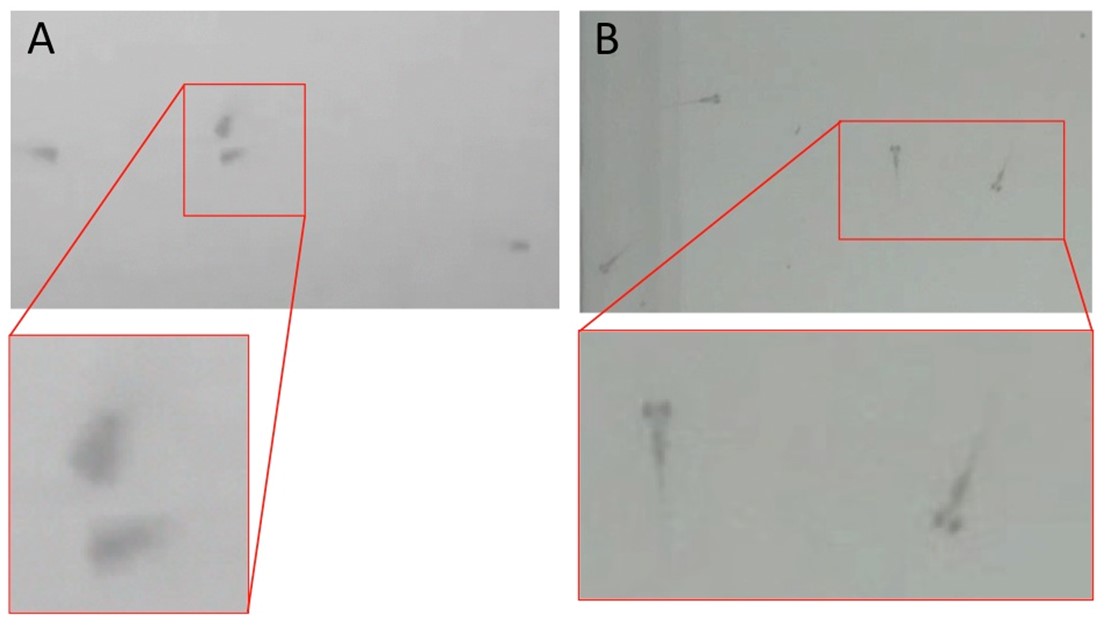

We built a zebrafish detection module to detect all the zebrafish in an individual video frame. The central premise of the detection algorithm is that the zebrafish are darker than the surrounding water.

Classical image processing techniques that detect dark objects of interest were surveyed, analyzed, and implemented. The user was given a choice of six detection algorithms: 1) Bottom Hat Transformation,

2) Kernel Density Estimation, 3) Multiscale Wavelets, 4) Local Comparison, 5) Locally Enhancing Filtering, and 6) Band Pass Filtering. Each of these algorithms was implemented, and each provides the user

with different capabilities in terms of accuracy, speed, etc. Detection also resulted in good segmentation (grouping of detected pixels into an object of interest).
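Of the six options, the bottom-hat transformation is the simplest to show in full: it subtracts the image from its morphological closing, so small dark objects on a brighter background come out as bright blobs. The sketch below is a plain-Python illustration on a grayscale image stored as a list of rows. The square structuring element, the `radius` and `threshold` parameters, and the function names are assumptions, not details of the module described here.

```python
def _window_filter(img, radius, pick):
    """Apply pick (max or min) over a (2*radius + 1) square neighbourhood,
    using only the neighbours that fall inside the image."""
    height, width = len(img), len(img[0])
    out = []
    for r in range(height):
        row = []
        for c in range(width):
            window = [img[rr][cc]
                      for rr in range(max(0, r - radius), min(height, r + radius + 1))
                      for cc in range(max(0, c - radius), min(width, c + radius + 1))]
            row.append(pick(window))
        out.append(row)
    return out


def bottom_hat(img, radius=1):
    """Bottom-hat transform: morphological closing minus the original image."""
    dilated = _window_filter(img, radius, max)     # dilation
    closed = _window_filter(dilated, radius, min)  # erosion of the dilation = closing
    return [[closed[r][c] - img[r][c] for c in range(len(img[0]))]
            for r in range(len(img))]


def detect_dark_objects(img, radius=1, threshold=50):
    """Binary mask of pixels that are much darker than their surroundings."""
    return [[v > threshold for v in row] for row in bottom_hat(img, radius)]


if __name__ == "__main__":
    frame = [[200] * 5 for _ in range(5)]
    frame[2][2] = 50                               # one dark pixel on bright "water"
    for row in detect_dark_objects(frame):
        print(row)
```

The structuring element has to be larger than the objects of interest; otherwise the closing does not fill them in and the difference image stays near zero.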

The results of the detection algorithm module (using the Bottom Hat Transformation) is quantified in terms of precision, recall, and F-scores. Precision and recall of the detection algorithm with respect

to ground truth obtained manually from four human observers (X) is plotted against precision-recall values of each observer against the others (dots) in a scatterplot to indicate that the detection algorithm

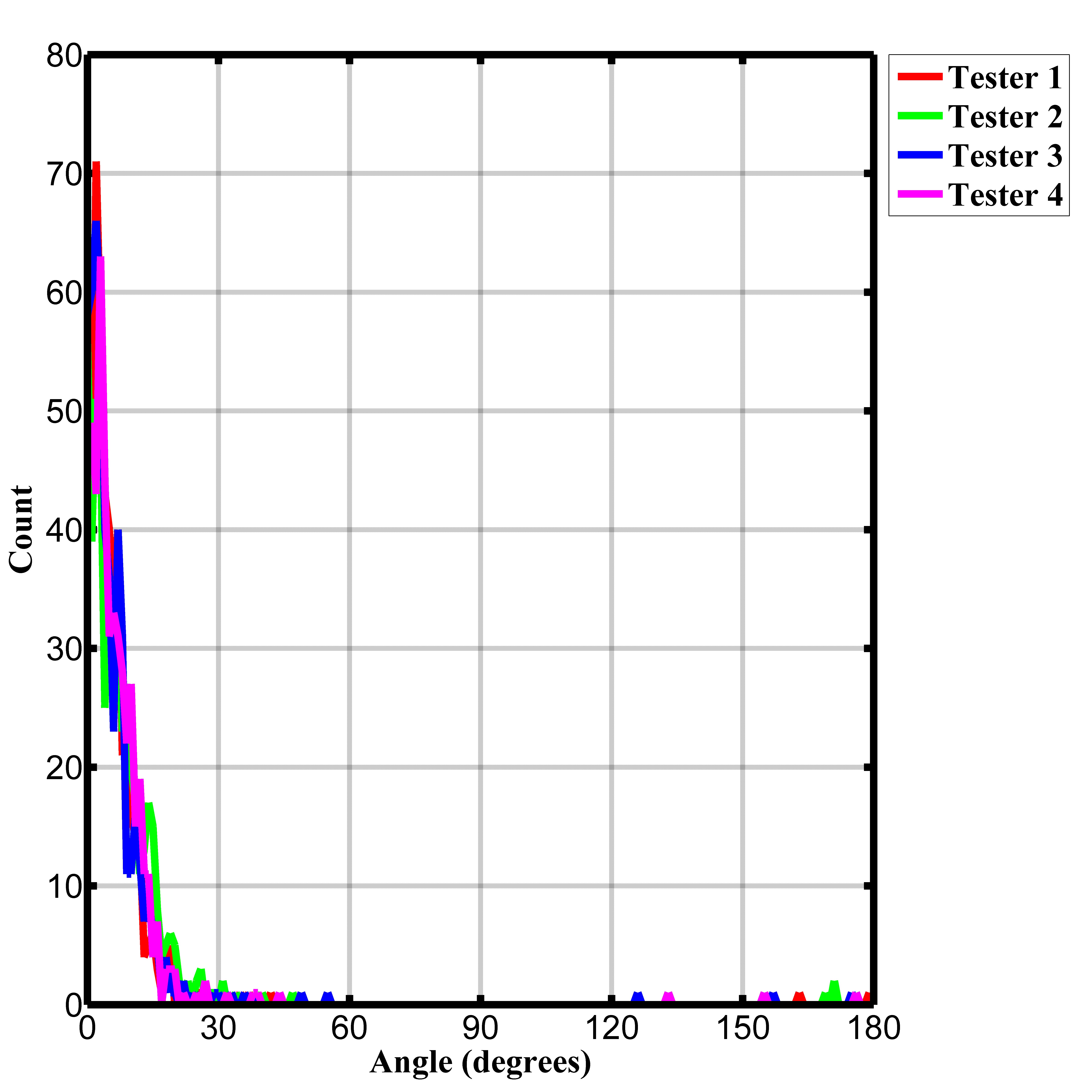

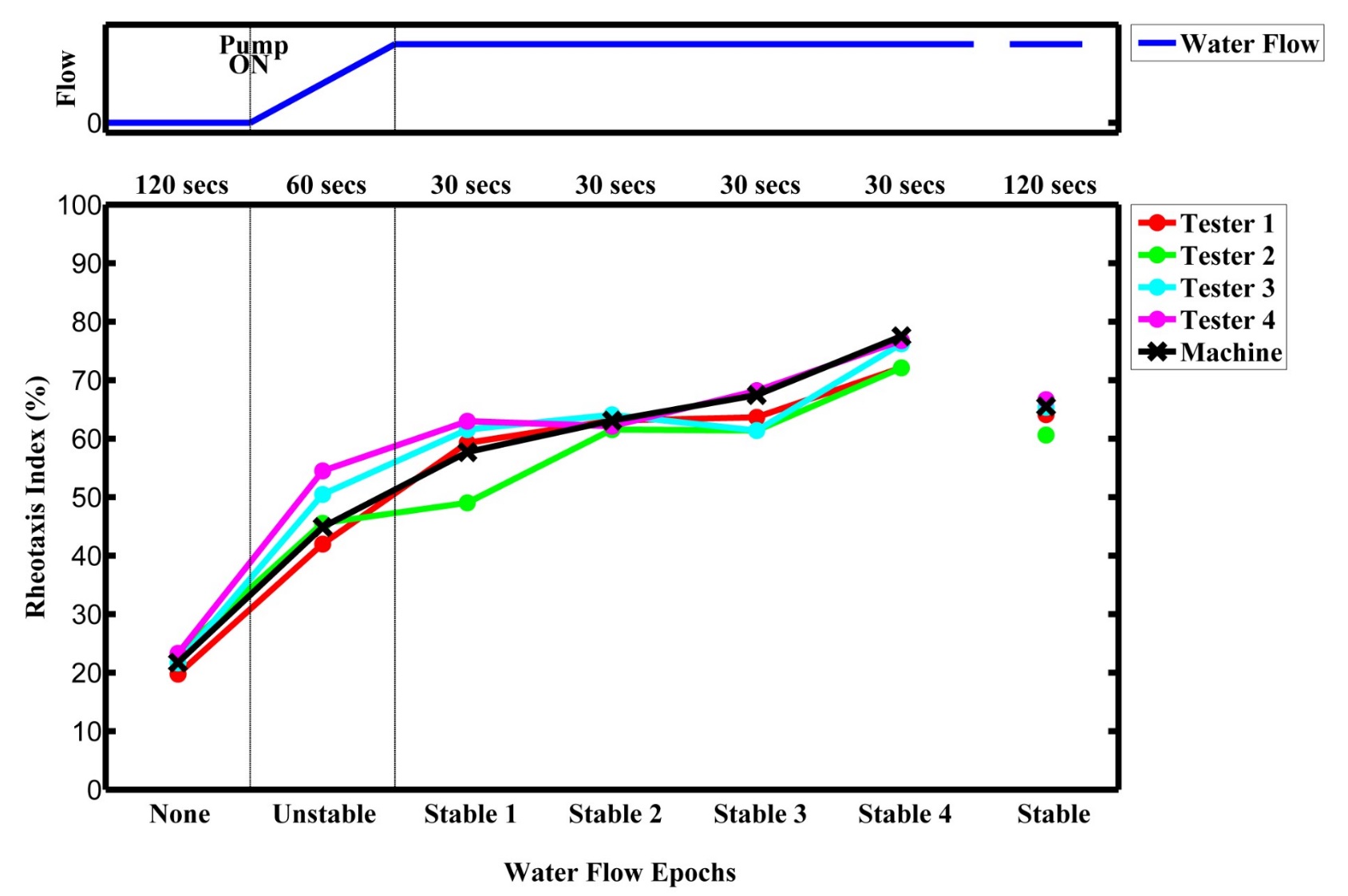

achieves a high degree of precision and recall, and also performs comparably with inter-operator variance. The performance of the principal component orientation determination module is plotted

as a distribution of mean absolute angular error compared with ground truth orientations obtained from each of the four human observers. Finally, the RI at each flow epoch

(flow off, flow stabilization, and flow on) was plotted to determine the performance of our high-throughput, fully automated behavioral assay.
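The idea behind a principal component orientation estimate can be sketched as follows: the orientation of a detected fish is taken as the direction of largest variance of its pixel coordinates. This is a minimal illustration, assuming each detection is a list of `(x, y)` pixel coordinates; the function names are hypothetical. The principal axis alone cannot tell head from tail, so the angle is reported in [0, 180) and the error is measured between axes; how the actual module resolves heading is not described on this page.

```python
from math import atan2, degrees


def principal_orientation(pixels):
    """Orientation in degrees, in [0, 180), of the principal axis of a set of
    (x, y) pixel coordinates, from the 2x2 covariance matrix in closed form."""
    n = len(pixels)
    mean_x = sum(x for x, _ in pixels) / n
    mean_y = sum(y for _, y in pixels) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pixels) / n
    syy = sum((y - mean_y) ** 2 for _, y in pixels) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pixels) / n
    # Angle of the eigenvector with the largest eigenvalue.
    angle = 0.5 * degrees(atan2(2.0 * sxy, sxx - syy))
    return angle % 180.0


def axial_error(angle_a, angle_b):
    """Absolute difference between two axis orientations, in [0, 90] degrees."""
    diff = abs(angle_a - angle_b) % 180.0
    return min(diff, 180.0 - diff)


if __name__ == "__main__":
    diagonal_fish = [(0, 0), (1, 1), (2, 2), (3, 3)]
    estimate = principal_orientation(diagonal_fish)
    print(estimate, axial_error(estimate, 40.0))
```

Averaging `axial_error` over all detections, once per human observer, would give a mean absolute angular error in the spirit of the evaluation described above.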

Zebrafish animal models lend themselves to behavioral assays that can facilitate rapid screening of ototoxic, otoprotective, and otoregenerative drugs.

Structurally similar to human inner ear hair cells, the mechanosensory hair cells on their lateral line allow the zebrafish to sense water flow and orient head-to-current in a behavior called rheotaxis.

This rheotaxis behavior deteriorates in a dose-dependent manner with increased exposure to the ototoxin cisplatin, thereby establishing itself as an excellent biomarker for anatomic damage to

lateral line hair cells. Building on work by our group and others, we have built a new, fully-automated high-throughput behavioral assay system that uses automated image analysis techniques

to quantify rheotaxis behavior. This novel system consists of a custom-designed swimming apparatus and imaging system consisting of network-controlled Raspberry Pi microcomputers

capturing infrared video. Automated analysis techniques detect individual zebrafish, compute their orientation, and quantify the rheotaxis behavior of a zebrafish test population,

producing a powerful, high-throughput behavioral assay. Using our fully-automated biological assay to test a standardized ototoxic dose of cisplatin against varying doses of compounds

that protect or regenerate hair cells may facilitate rapid translation of candidate drugs (currently no FDA approved treatment exists) into preclinical mammalian models of hearing loss.

@article{Todd17,
  author = {Todd, Douglas W. and Philip, Rohit C. and Niihori, Maki and Ringle, Ryan A. and Coyle, Kelsey R. and Zehri, Sobia F. and Zabala, Leanne and Mudery, Jordan A. and Francis, Ross H. and Rodriguez, Jeffrey J. and Jacob, Abraham},
  title = {A Fully Automated High-Throughput Zebrafish Behavioral Ototoxicity Assay},
  journal = {Zebrafish},
  volume = {14},
  number = {4},
  publisher = {Mary Ann Liebert, Inc.},
  issn = {},
  doi = {10.1089/zeb.2016.1412},
  pages = {331--342},
  year = {2017},
}

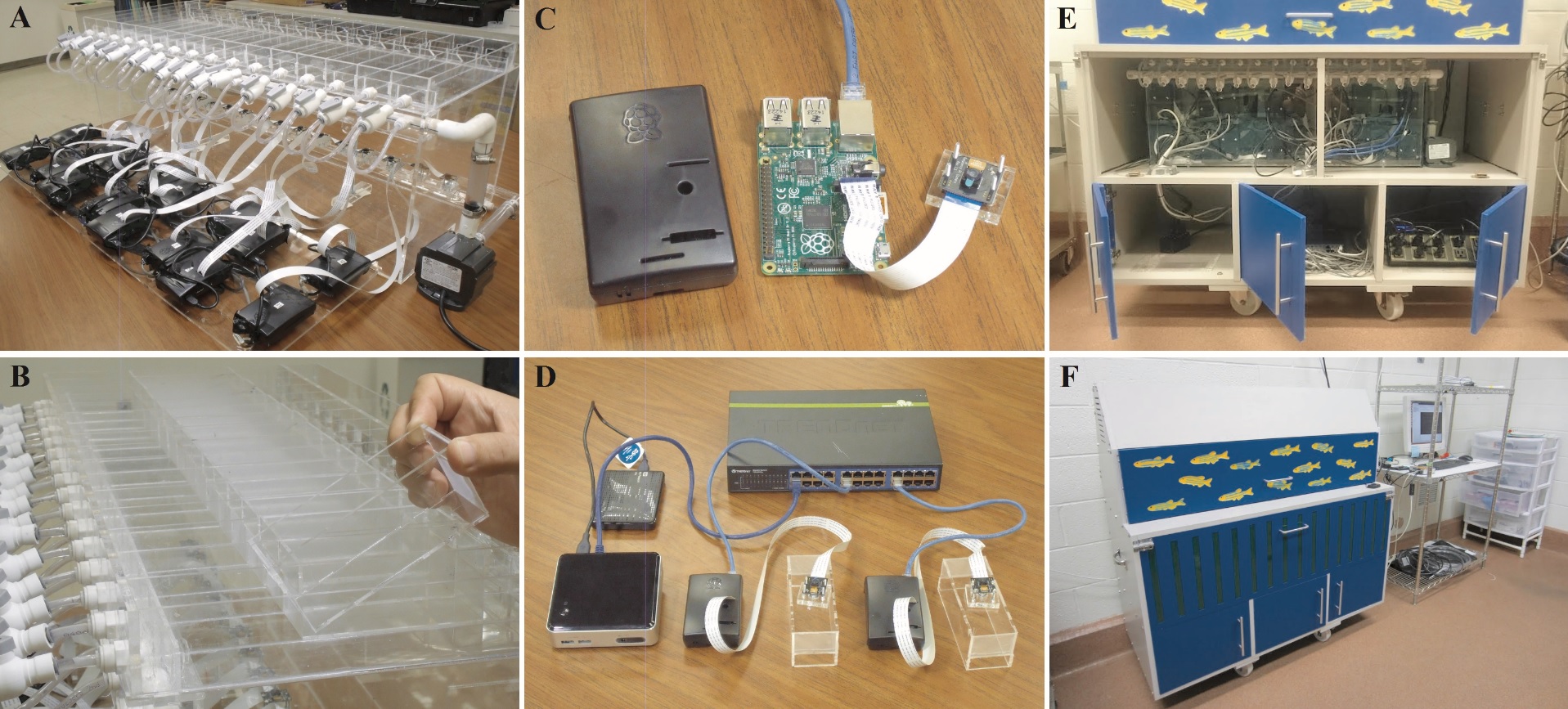

We built a swimming apparatus consisting of a swimming tank (A) comprising sixteen swimming lanes. We also built removable swimming chambers (B) that would house zebrafish test populations, facilitating

quick turnaround time between experiments. We then built a low cost imaging system using a network of interconnected dedicated Raspberry Pi

microcomputers (C) with NoIR infrared cameras for each lane, which were then connected via a zebrafish behavioral assay intranet

to a control computer (D). The entire system was housed in a cabinet (E & F) built by an external contractor.


A crucial element of any video is a moving object - something that we can clearly perceive to have changed

its position with time. An immediate intellectual reaction to this observed motion is to determine how much

the object has moved from one frame to the next, with respect to its surroundings.

Once the motion of the object (and consequently its speed and direction of motion) is estimated, the logical

progression is to track it from frame to frame, until the object leaves the area of interest or until the end of the video.

Object tracking is currently one of the most active research areas in computer vision.

In this paper we compare and analyze the performance of six recent object tracking algorithms on a raw,

low resolution, unregistered, interlaced aerial video of multiple cars moving on a roadway.

This dataset comprising 50 frames of video offers a wide variety of challenges related to imaging issues

such as low resolution, unregistered frames, camera motion, and interlaced video, as well as object detection

problems such as low contrast, background clutter, object occlusion and varying degrees of motion.

We present the performance of these algorithms in terms of both overlap accuracy and the Euclidean distance

of the center pixel returned by the tracking algorithm from the ground truth.
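The two evaluation quantities named above are straightforward to compute once the tracker output and the ground truth are both expressed as bounding boxes. The sketch below assumes boxes in `(x, y, width, height)` form and takes "overlap accuracy" to mean intersection over union; both are assumptions, since the abstract does not spell out the box format or the exact overlap definition.

```python
from math import hypot


def overlap_ratio(box_a, box_b):
    """Intersection over union of two (x, y, width, height) boxes."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    inter_w = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    intersection = inter_w * inter_h
    union = aw * ah + bw * bh - intersection
    return intersection / union if union else 0.0


def center_distance(box_a, box_b):
    """Euclidean distance between the centers of two (x, y, width, height) boxes."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    return hypot((ax + aw / 2.0) - (bx + bw / 2.0),
                 (ay + ah / 2.0) - (by + bh / 2.0))


if __name__ == "__main__":
    tracked = (0, 0, 10, 10)
    truth = (5, 0, 10, 10)
    print(overlap_ratio(tracked, truth), center_distance(tracked, truth))
```

Evaluating both functions on every frame gives one overlap curve and one localization-error curve per tracker, which can then be compared across algorithms.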

@INPROCEEDINGS{6806041,
  author={Philip, R.C. and Ram, S. and Xin Gao and Rodriguez, J.J.},
  booktitle={Image Analysis and Interpretation (SSIAI), 2014 IEEE Southwest Symposium on},
  title={A comparison of tracking algorithm performance for objects in wide area imagery},
  year={2014},
  month={April},
  pages={109-112},
  keywords={computer vision;image registration;image resolution;object tracking;road vehicles;traffic engineering computing;video signal processing;Euclidean distance;computer vision;interlaced aerial video;low resolution video;multiple cars;object tracking algorithm;roadway;unregistered video;wide area imagery;Accuracy;Clutter;Computer vision;Image resolution;Object tracking;Robustness;Object tracking;localization error;overlap area;partial occlusion;wide area imagery},
  doi={10.1109/SSIAI.2014.6806041},
}

Accurate segmentation of breast cancer tissue

is imperative to aid the oncologist in researching the effects of the tumor on the blood vasculature surrounding it

as well as determining whether angiogenesis or apoptosis is occurring.

This paper proposes a new automated system for segmentation of breast cancer tissue.

The segmentation algorithm involves a principal component region growing scheme for high-resolution images.

The number of candidate seed pixels is extremely large due to the high resolution.

The main focus of this paper is to present a multi-resolution scheme for accurate selection of seed pixels

to be presented as inputs to the region growing segmentation algorithm.

The system is tested for accuracy, and the efficiency is measured in terms of percentage reduction in

number of seed pixels, as well as accuracy of the segmentation results.

@INPROCEEDINGS{4712035,
  author={Philip, R.C. and Rodríguez, J.J. and Gillies, R.J.},
  booktitle={Image Processing, 2008. ICIP 2008. 15th IEEE International Conference on},
  title={Seed pruning using a multi-resolution approach for automated segmentation of breast cancer tissue},
  year={2008},
  month={Oct},
  pages={1436-1439},
  keywords={biological tissues;cancer;image resolution;image segmentation;medical image processing;principal component analysis;automated segmentation;breast cancer tissue segmentation;multiresolution approach;principal component region growing scheme;region growing segmentation algorithm;seed pruning;Breast cancer;KL transform;region growing;seed pixels},
  doi={10.1109/ICIP.2008.4712035},
  ISSN={1522-4880},
} |

|

|

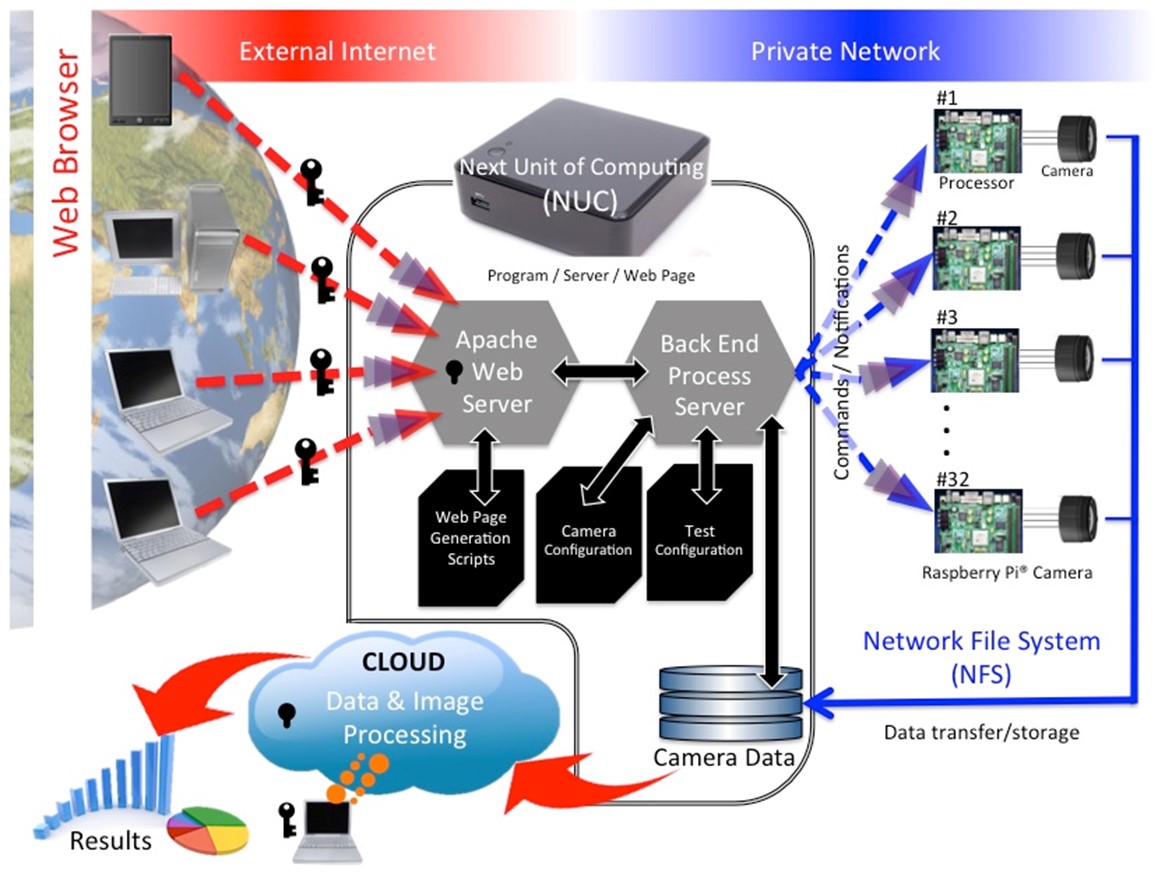

The control system was built on an Intel NUC computer running Ubuntu 16.04 LTS with a custom network configuration that serves the behavioral assay intranet and communicates with the individual Raspberry Pis.

The webpage is served by an Apache web server running CGI scripts and Perl modules that access configuration information and RI results from XML files. SSH and Samba share access to the Pis is also available.
