Siyang Cao

My research is motivated by the rapid development of radar and sensor technology. I am interested in radar signal processing, multimodal sensor fusion, machine learning with an emphasis on sensors, and radar imaging.

Current areas of interest include:

Sensor fusion across multi-modal sensors (e.g., radar, camera, and LiDAR)

Complex target signatures using mmWave radar (e.g., human pose estimation and fall detection)

Advanced radar imaging techniques

Radar interference mitigation strategies

Machine learning-based sensor detectors

Radar Detector based on Transformer


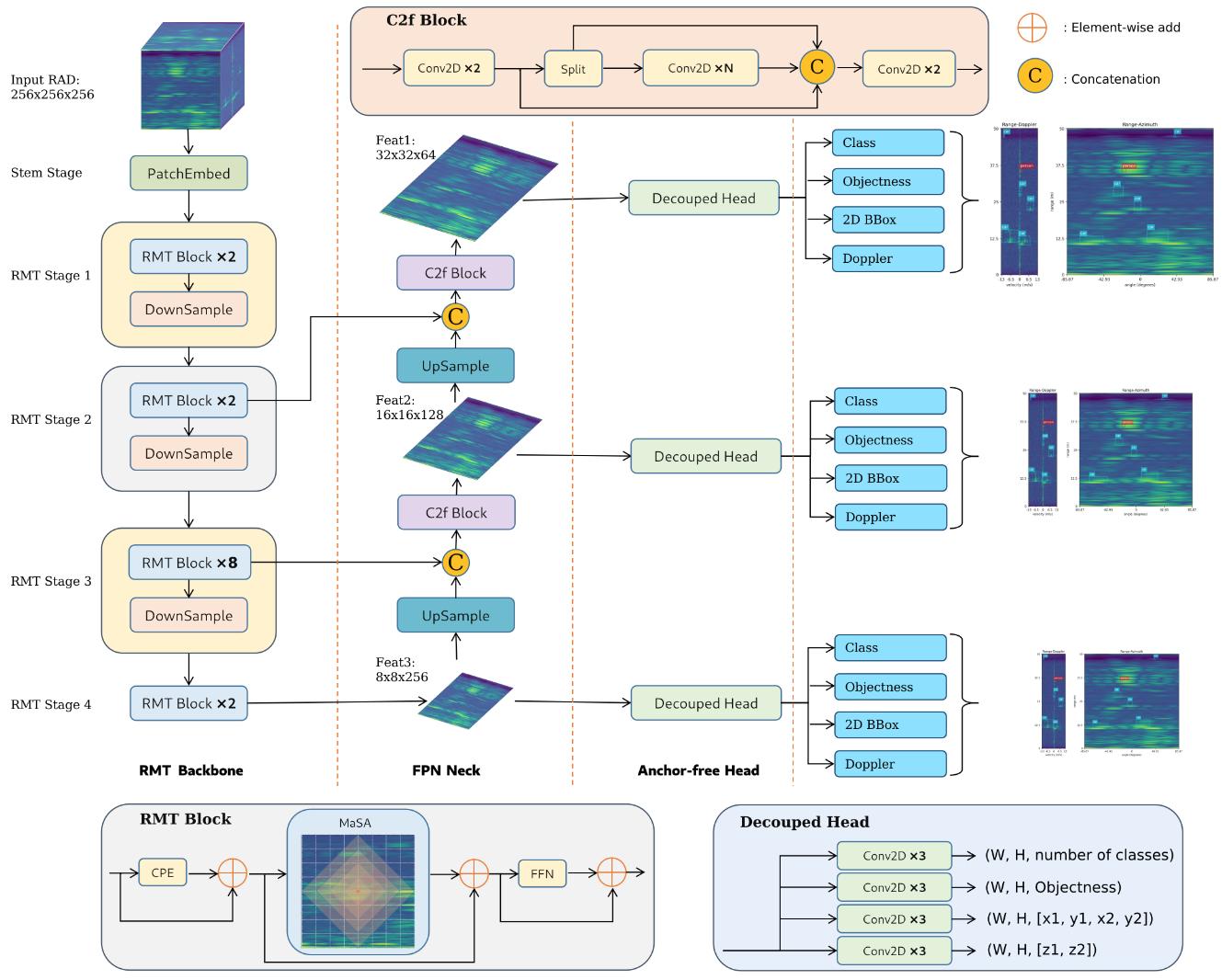

Despite significant advancements in environment perception capabilities for autonomous driving and intelligent robotics, cameras and LiDARs remain notoriously unreliable in low-light conditions and adverse weather, which limits their effectiveness. Radar is a reliable and low-cost sensor that can compensate for these limitations. However, radar-based object detection has been underexplored due to the inherent weaknesses of radar data, such as low resolution, high noise, and lack of visual information. In this work, we present TransRAD, a 3D radar object detection model designed to address these challenges by leveraging the Retentive Vision Transformer (RMT) to more effectively learn features from information-dense radar Range-Azimuth-Doppler (RAD) data. Our approach uses the Retentive Manhattan Self-Attention (MaSA) mechanism provided by RMT to incorporate explicit spatial priors, thereby enabling more accurate alignment with the spatial saliency characteristics of radar targets in RAD data and achieving precise 3D radar detection across the Range-Azimuth-Doppler dimensions. Furthermore, we propose Location-Aware NMS to effectively mitigate the common issue of duplicate bounding boxes in deep radar object detection. The experimental results demonstrate that TransRAD outperforms state-of-the-art methods in both 2D and 3D radar detection tasks, achieving higher accuracy, faster inference speed, and reduced computational complexity. Code is available at https://github.com/radar-lab/TransRAD. Papers
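To make the idea of an explicit spatial prior concrete, the sketch below builds a decay mask in which the weight between two grid cells shrinks with their Manhattan distance. It is only an illustration and is not taken from the TransRAD code: the form `gamma ** distance`, the 2D grid (for example, range and azimuth bins), and the function names `manhattan_decay_mask` and `apply_spatial_prior` are all assumptions made for this example.

```python
def manhattan_decay_mask(height, width, gamma=0.9):
    """Return an (H*W) x (H*W) mask with entry gamma ** (Manhattan distance).

    Tokens are grid cells enumerated row by row. The decay form and the
    default gamma are assumptions for illustration only.
    """
    coords = [(row, col) for row in range(height) for col in range(width)]
    return [
        [gamma ** (abs(r1 - r2) + abs(c1 - c2)) for (r2, c2) in coords]
        for (r1, c1) in coords
    ]


def apply_spatial_prior(attention_row, decay_row):
    """Scale one row of attention weights element-wise by the decay mask."""
    return [a * d for a, d in zip(attention_row, decay_row)]


if __name__ == "__main__":
    mask = manhattan_decay_mask(2, 3, gamma=0.5)
    # Weight between the top-left cell and the bottom-right cell (distance 3).
    print(mask[0][5])
```

Cells that are close on the grid keep most of their attention weight, while distant cells are suppressed, which is the sense in which the prior favors spatially compact targets.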


Human Pose Estimation


To enhance human pose estimation capabilities, we proposed mmPose-FK, a novel mmWave radar-based pose estimation method that utilizes a dynamic forward kinematics (FK) approach. This method is designed to overcome the challenges of low resolution, specularity, and noise artifacts common to mmWave radars, which often result in unstable joint poses. By integrating FK into a deep learning model, mmPose-FK provides more stable and accurate pose estimation, improving the reliability of radar-based human skeletal tracking. Papers
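As a rough illustration of why forward kinematics can stabilize a skeleton, the sketch below computes joint positions of a planar chain from bone lengths and relative joint angles. It is a simplified 2D example under my own assumptions, not the mmPose-FK model: the function name `forward_kinematics`, the planar setting, and the angle convention are all hypothetical.

```python
import math


def forward_kinematics(root, bone_lengths, joint_angles):
    """Return joint positions of a planar kinematic chain.

    root: (x, y) of the first joint.
    bone_lengths: length of each bone, in order along the chain.
    joint_angles: rotation of each bone relative to the previous one (radians).
    """
    x, y = root
    heading = 0.0
    joints = [(x, y)]
    for length, angle in zip(bone_lengths, joint_angles):
        heading += angle                 # accumulate relative rotations
        x += length * math.cos(heading)  # step along the bone direction
        y += length * math.sin(heading)
        joints.append((x, y))
    return joints


if __name__ == "__main__":
    # Two bones of length 1: first points up, second bends back to the right.
    for joint in forward_kinematics((0.0, 0.0), [1.0, 1.0], [math.pi / 2, -math.pi / 2]):
        print(joint)
```

Because positions are derived from angles and fixed bone lengths, every predicted pose keeps the same limb lengths by construction, which removes one source of jitter that direct per-joint regression can show.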


Fall Detection System


In response to the growing elderly population and associated fall risks, we developed a radar-based fall detection system using mmWave radar and machine learning to detect falls in real time. Our system employs deep learning models like CNNs and RNNs to accurately monitor falls by learning complex features from radar data. Our survey emphasizes the effectiveness of Micro-Doppler, Range-Doppler, and Range-Doppler-Angle techniques in ensuring privacy and detecting falls through obstructions, while also addressing challenges such as unpredictability and the lack of realistic fall data. Papers


Multimodal Sensor Calibration - Target based


Advances in autonomous driving are inseparable from sensor fusion. Heterogeneous sensors are widely used for sensor fusion because of their complementary properties, with radar and camera being the most commonly equipped sensors. Intrinsic and extrinsic calibration are essential steps in sensor fusion. Extrinsic calibration, which is independent of each sensor's own parameters and is performed after the sensors are installed, largely determines the accuracy of sensor fusion. Many target-based methods require cumbersome operating procedures and well-designed experimental conditions, making them extremely challenging to carry out. To this end, we propose a flexible, easy-to-reproduce, and accurate method for extrinsic calibration of a 3D radar and a camera. The proposed method does not require a specially designed calibration environment. Instead, a single corner reflector (CR) is placed on the ground, and radar and camera data are collected simultaneously and iteratively using the Robot Operating System (ROS). Radar-camera point correspondences are obtained from the timestamps and then used as input to the perspective-n-point (PnP) problem, whose solution yields the extrinsic calibration matrix. RANSAC is used for robustness, and the Levenberg-Marquardt (LM) nonlinear optimization algorithm is used for accuracy. Papers
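The quantity that a PnP solver and a subsequent LM refinement drive down is the reprojection error of the point correspondences. The sketch below only evaluates that error for a candidate extrinsic transform; it does not solve PnP, and it is not the calibration code from the paper. The function names, the pinhole intrinsics `(fx, fy, cx, cy)`, and the assumption that radar points are already expressed with the z axis pointing forward are all hypothetical choices for this example.

```python
import math


def project_radar_point(point, rotation, translation, intrinsics):
    """Map a 3D radar point to pixel coordinates with a pinhole camera model.

    rotation is a 3x3 nested list and translation a length-3 list; together
    they form the candidate radar-to-camera extrinsic transform.
    """
    fx, fy, cx, cy = intrinsics
    xc, yc, zc = [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]
    return fx * xc / zc + cx, fy * yc / zc + cy


def mean_reprojection_error(correspondences, rotation, translation, intrinsics):
    """Average pixel distance between projected radar points and image points."""
    errors = []
    for radar_point, (u_obs, v_obs) in correspondences:
        u, v = project_radar_point(radar_point, rotation, translation, intrinsics)
        errors.append(math.hypot(u - u_obs, v - v_obs))
    return sum(errors) / len(errors)


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    pairs = [((1.0, 0.5, 10.0), (740.0, 410.0))]
    print(mean_reprojection_error(pairs, identity, [0.0, 0.0, 0.0], (1000.0, 1000.0, 640.0, 360.0)))
```

A RANSAC loop would call a function like this on random subsets of correspondences to reject outliers, and LM would then adjust the rotation and translation to minimize the same residual over the inliers.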


Multimodal Sensor Calibration - Targetless


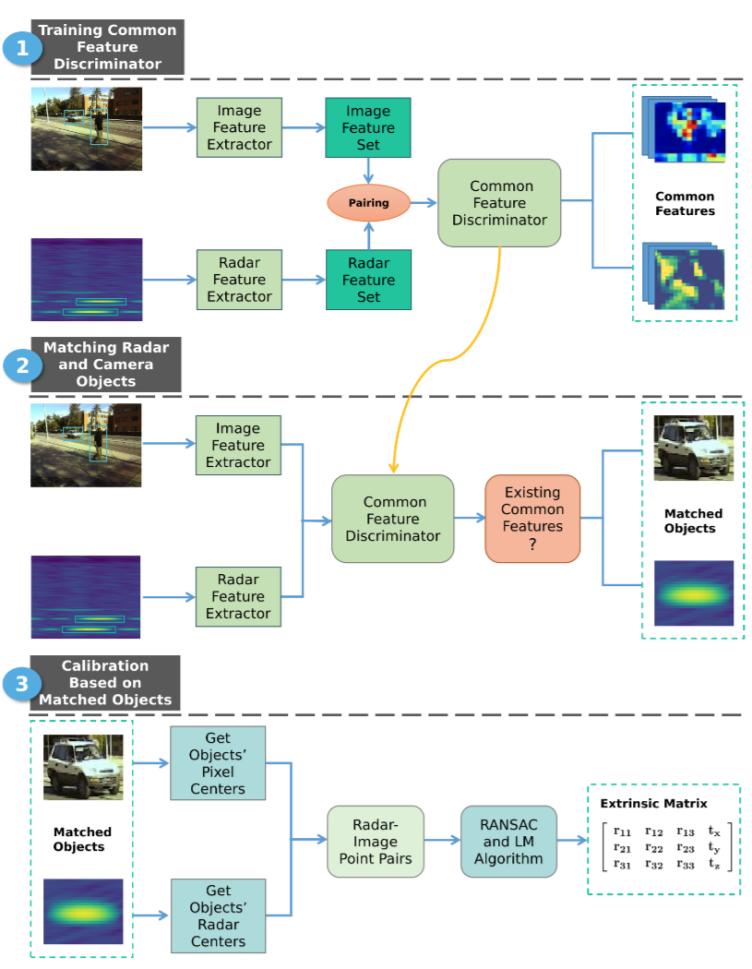

Sensor fusion is essential for autonomous driving and autonomous robots, and radar-camera fusion systems have gained popularity due to their complementary sensing capabilities. However, accurate calibration between these two sensors is crucial to ensure effective fusion and improve overall system performance. Calibration involves intrinsic and extrinsic calibration, with the latter being particularly important for achieving accurate sensor fusion. Unfortunately, many target-based calibration methods require complex operating procedures and well-designed experimental conditions, posing challenges for researchers attempting to reproduce the results. To address this issue, we introduce a novel approach that leverages deep learning to extract a common feature from raw radar data (i.e., Range-Doppler-Angle data) and camera images. Instead of representing these common features explicitly, our method uses them implicitly to match identical objects from both data sources. Specifically, the extracted common feature serves as an example to demonstrate an online targetless calibration method between the radar and camera systems. The extrinsic transformation matrix is estimated through this feature-based approach. To enhance the accuracy and robustness of the calibration, we apply RANSAC and the Levenberg-Marquardt (LM) nonlinear optimization algorithm when deriving the matrix. Papers


Object Tracking via Multimodal Sensor System


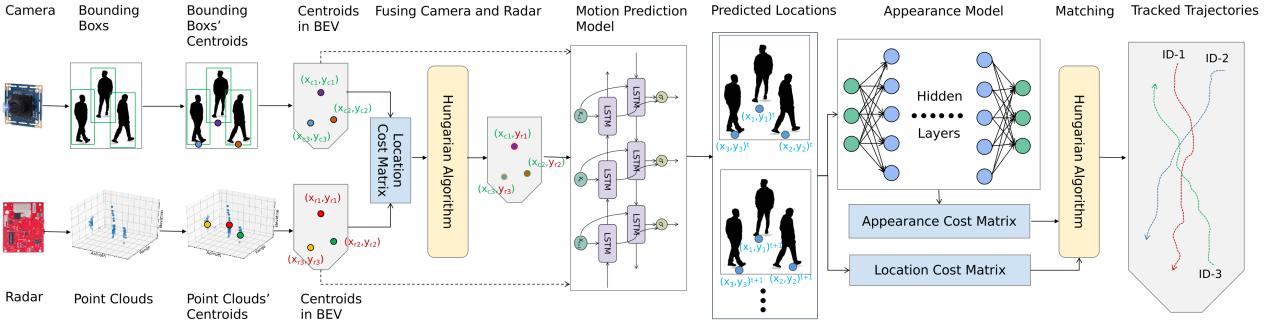

Autonomous driving holds great promise in addressing traffic safety concerns by leveraging artificial intelligence and sensor technology. Multi-Object Tracking plays a critical role in ensuring safer and more efficient navigation through complex traffic scenarios. This paper presents a novel deep learning-based method that integrates radar and camera data to enhance the accuracy and robustness of Multi-Object Tracking in autonomous driving systems. The proposed method leverages a Bi-directional Long Short-Term Memory network to incorporate long-term temporal information and improve motion prediction. An appearance feature model inspired by FaceNet is used to establish associations between objects across different frames, ensuring consistent tracking. A tri-output mechanism is employed, consisting of individual outputs for the radar and camera sensors and a fusion output, to provide robustness against sensor failures and produce accurate tracking results. In extensive evaluations on real-world datasets, our approach demonstrates remarkable improvements in tracking accuracy, ensuring reliable performance even in low-visibility scenarios. Papers


Object Tracking via Multimodal Sensor System


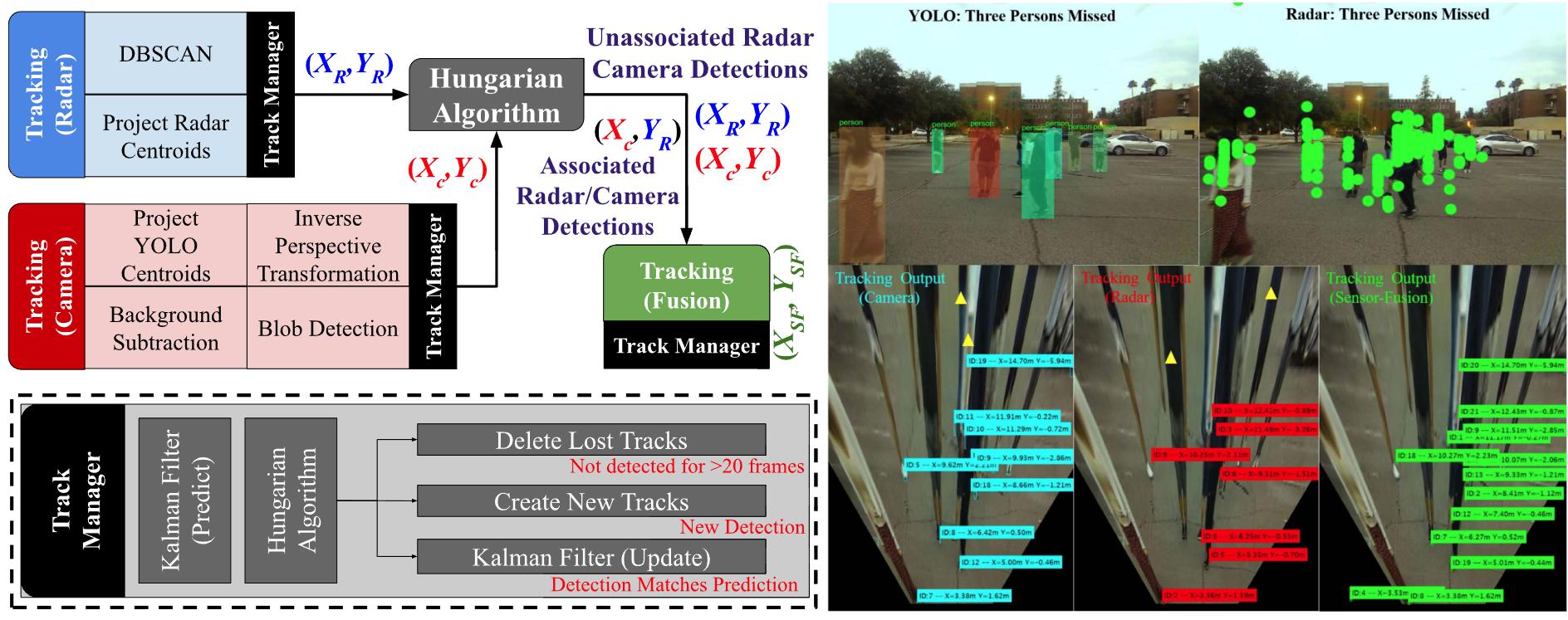

With the recent growth of the autonomous and automotive industries, sensor-fusion-based perception has garnered significant attention for multi-object classification and tracking applications. Extending our previous work on sensor-fusion-based multi-object classification, this letter presents a robust tracking framework using high-level fusion of a monocular camera and a millimeter-wave radar. The proposed method aims to a) improve the localization accuracy by leveraging the radar's depth resolution and the camera's cross-range resolution through decision-level sensor fusion; and b) make the system robust by continuing to track objects despite single-sensor failures using a tri-Kalman filter setup. The camera's intrinsic calibration parameters and the height of the sensor placement are used to estimate a bird's-eye view of the scene, which in turn aids in estimating the 2-D position of the targets from the camera. The radar and camera measurements in a given frame are associated using the Hungarian Algorithm. Finally, a tri-Kalman filter based framework is used as the tracking approach. The proposed approach offers promising MOTA and MOTP metrics, including significantly low missed detection rates, that could aid large-scale and small-scale autonomous or robotics applications with safe perception. Papers
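The association step can be pictured as a minimum-cost assignment between radar and camera detections in the bird's-eye view. The sketch below solves that assignment by brute force over permutations rather than with the Hungarian Algorithm; for a handful of targets both give the same optimal pairing, but brute force does not scale. The Euclidean cost, the gating threshold `max_distance`, and the function name `associate` are assumptions for illustration and are not taken from the paper.

```python
import itertools
import math


def associate(first_xy, second_xy, max_distance=2.0):
    """Pair detections from two sensors by minimum total Euclidean distance.

    Returns sorted (first_index, second_index) pairs. Pairs farther apart
    than max_distance are dropped (a simple gate, assumed for this sketch).
    """
    if len(first_xy) > len(second_xy):
        # Solve the transposed problem, then flip the index order back.
        flipped = associate(second_xy, first_xy, max_distance)
        return sorted((j, i) for i, j in flipped)

    best_cost, best_perm = math.inf, ()
    for perm in itertools.permutations(range(len(second_xy)), len(first_xy)):
        cost = sum(math.dist(first_xy[i], second_xy[j]) for i, j in enumerate(perm))
        if cost < best_cost:
            best_cost, best_perm = cost, perm
    return [
        (i, j)
        for i, j in enumerate(best_perm)
        if math.dist(first_xy[i], second_xy[j]) <= max_distance
    ]


if __name__ == "__main__":
    radar = [(0.0, 10.0), (3.0, 20.0)]
    camera = [(3.2, 19.5), (0.1, 10.4), (8.0, 40.0)]
    print(associate(radar, camera))
```

Detections left unpaired by the gate are the cases where a single-sensor track would have to carry the object on its own, which is where a per-sensor filter alongside the fused one becomes useful.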


Automotive Radar Interference Mitigation


Interference among frequency-modulated continuous-wave (FMCW) automotive radars can either raise the noise floor, which occurs in most cases, or generate a ghost target in rare situations. To address the raised noise floor caused by interference, we proposed a low-computational-cost method that uses an adaptive noise canceller to increase the signal-to-interference ratio (SIR). Both simulation and experiment showed good interference mitigation performance. Papers (related patent submitted)
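For readers unfamiliar with adaptive noise cancelling, the sketch below shows the general principle with a least-mean-squares (LMS) filter: a reference input correlated with the interference is filtered and subtracted from the primary input, and the remainder is the cleaned signal. The choice of LMS, the tap count, the step size, and the way the reference is obtained are all assumptions for this example; the source does not specify them, and this is not the method from the paper or the patent.

```python
import math


def lms_noise_canceller(primary, reference, num_taps=4, step_size=0.01):
    """Subtract an adaptively filtered reference from the primary signal.

    primary: samples containing the wanted signal plus interference.
    reference: samples correlated with the interference only.
    Returns the error sequence, which is the interference-reduced output.
    """
    weights = [0.0] * num_taps
    history = [0.0] * num_taps  # most recent reference samples, newest first
    cleaned = []
    for sample, ref in zip(primary, reference):
        history = [ref] + history[:-1]
        estimate = sum(w * h for w, h in zip(weights, history))
        error = sample - estimate  # what remains after removing the estimate
        weights = [w + step_size * error * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned


if __name__ == "__main__":
    n = 2000
    target = [math.sin(2 * math.pi * 0.01 * k) for k in range(n)]
    interferer = [math.sin(2 * math.pi * 0.13 * k) for k in range(n)]
    mixed = [t + 2.0 * i for t, i in zip(target, interferer)]
    cleaned = lms_noise_canceller(mixed, interferer)
    residual = sum((c - t) ** 2 for c, t in zip(cleaned[1500:], target[1500:])) / 500
    print("residual interference power:", residual)
```

Each sample costs only a few multiply-adds per tap, which is the property that makes this family of filters attractive when computation is limited.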


Human Behavior Classification


To address potential gaps in patient monitoring in hospitals, we propose a novel patient behavior detection system using mmWave radar and a deep convolutional neural network (CNN), which supports simultaneous recognition of multiple patients’ behaviors in real time. The system was tested in real-time operation and achieved very good inference accuracy when predicting each patient’s behavior in a two-patient scenario. Papers

Applied at SeVA Technology LLC

Radar Imaging Technique


Recent work shows that moving a single-transceiver radar system can enable 3D imaging. A portable millimeter-wave radar 3D imaging system is proposed. Range-Doppler information is extracted from the raw radar data, and an inverse Radon transform then converts the relative velocity projection data and angle vectors into Cartesian coordinates, i.e., azimuth and elevation. Papers


Research Support

I gratefully acknowledge ongoing and past support from sponsors for my research group.
